Arguably, the most important aspect of my job is monitoring data collection. How much data you ultimately collect can make or break a project. Early on, I made a few data collection mistakes that I think are worth sharing. For those with more experience, a reminder of the basics never hurts.

Know where your data is coming from

If you are activating in ten markets, you had better be receiving results from ten markets. This is one area where an updated routing schedule proves useful. Save yourself the headache of tracking down a brand ambassador only to find out that he or she didn’t submit results because there were no events.

The first time, it’s a relief, because at least you aren’t missing data. The tenth time, it is more irritating than a rock in your shoe. Having market-specific quotas also makes it easy to identify where a product is performing best, which is a winner come reporting time.
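As a rough illustration of checking results against the routing schedule, here is a minimal Python sketch. The market names, the `scheduled_events` and `submissions` structures, and the function name are all made up for the example; your own schedule and survey export will look different.

```python
def markets_needing_follow_up(scheduled_events, submissions):
    """Return markets that had events scheduled but submitted no results.

    scheduled_events: hypothetical dict of market -> number of events this week
    submissions: hypothetical list of dicts, each with a "market" key
    """
    # Only markets with at least one event are expected to report.
    expected = {market for market, events in scheduled_events.items() if events > 0}
    reporting = {row["market"] for row in submissions}
    return sorted(expected - reporting)


# Illustrative data only: Boston had no events, so it is not flagged.
scheduled_events = {"Austin": 3, "Boston": 0, "Chicago": 2}
submissions = [{"market": "Austin"}]
print(markets_needing_follow_up(scheduled_events, submissions))
```

With a check like this, a market with no scheduled events never shows up as missing, so you only chase ambassadors who actually owed you results.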

Make sure your data is complete

Similar to the example above, I had an ambassador who was skipping some survey questions, leaving me with what I perceived as gaps in the data. I later found out that she skipped those questions because she was not allowed to distribute samples at her location. Suddenly, the skipped questions no longer meant the data was incomplete, and I could have more confidence in it.

Make sure you are continually getting consistent data

This one was a pain. I had a project that was going swimmingly. We were three weeks away from wrapping up. We had met and exceeded every quota we had set. All that was left was to wait for the end of the program and write the final report. Then the unexpected happened. The client wanted to compare the first half of the program to the second, as they had activated at the same locations numerous times and were curious whether they had made any impact at the venue level.

This seemed simple enough, until I realized that half of the ambassadors had met their quota in the first five weeks, and then done only intermittent data collection over the next five weeks. That left me floundering. If I had ensured that data kept coming in after the quotas were met, this entire project would have been a home run. Instead, it had a single, glaring black mark.

So, how do you correct this?

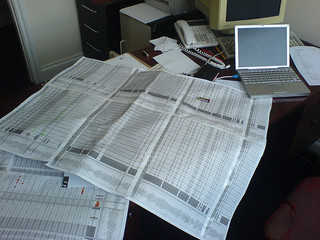

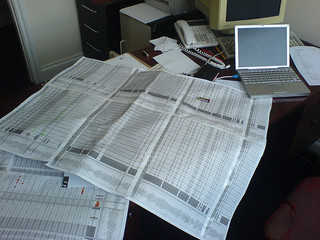

I decided to use a spreadsheet. The spreadsheet had a column for each week and a row for each quota. Below each quota were the response rates for the most important questions in the survey. It was a bit tedious at first, but it made a world of difference in the end. I had up-to-date counts for the client at a moment’s notice, and could review all my projects in a single location.
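If you would rather script the same idea than maintain it by hand, the tracker can be sketched in a few lines of Python. This is not the spreadsheet I used, just an approximation of it; the record layout (`week`, `answers`) and the question names are assumptions for the example.

```python
from collections import defaultdict


def weekly_tracker(responses, key_questions):
    """Summarize survey responses by week.

    responses: hypothetical list of dicts with "week" and "answers" keys
    key_questions: the questions whose response rates you want to watch
    Returns {week: {"count": n, "response_rates": {question: rate}}}.
    """
    by_week = defaultdict(list)
    for row in responses:
        by_week[row["week"]].append(row)

    tracker = {}
    for week, rows in sorted(by_week.items()):
        rates = {}
        for question in key_questions:
            # A missing or None answer counts as a skipped question.
            answered = sum(1 for r in rows if r["answers"].get(question) is not None)
            rates[question] = answered / len(rows)
        tracker[week] = {"count": len(rows), "response_rates": rates}
    return tracker


def quota_progress(tracker, quota):
    """Fraction of the quota collected so far, across all weeks."""
    return sum(week["count"] for week in tracker.values()) / quota


# Illustrative data only.
responses = [
    {"week": 1, "answers": {"q1": "yes", "q2": None}},
    {"week": 1, "answers": {"q1": "no", "q2": "a"}},
    {"week": 2, "answers": {"q1": None}},
]
tracker = weekly_tracker(responses, ["q1", "q2"])
print(tracker)
print(quota_progress(tracker, quota=6))
```

Each week becomes one entry, mirroring the one-column-per-week layout, and the response rates sit alongside the count just as they sat below each quota in the spreadsheet.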

I’d recommend, at the very least, defining your quotas at the start of every project and establishing an accurate way to measure your progress toward them on a weekly, if not more frequent, basis.
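To catch the problem I ran into, where collection tapers off once a quota is met, a simple check is to compare each ambassador's first-half and second-half counts. The sketch below is one possible way to do that; the `weekly_counts` layout, the ambassador labels, and the 50% threshold are arbitrary assumptions, not a rule.

```python
def flag_drop_off(weekly_counts, min_ratio=0.5):
    """Return ambassadors whose second-half collection fell well below the first half.

    weekly_counts: hypothetical dict of ambassador -> list of weekly response counts
    min_ratio: assumed threshold; second-half total below this share of the
               first-half total gets flagged
    """
    flagged = []
    for ambassador, counts in weekly_counts.items():
        half = len(counts) // 2
        first, second = sum(counts[:half]), sum(counts[half:])
        # Skip ambassadors with no first-half data to avoid dividing by zero.
        if first > 0 and second / first < min_ratio:
            flagged.append(ambassador)
    return sorted(flagged)


# Illustrative ten-week program: B hit quota early, then went quiet.
weekly_counts = {
    "A": [10, 12, 11, 9, 10, 11, 10, 9, 12, 10],
    "B": [20, 22, 25, 18, 15, 3, 0, 2, 0, 1],
}
print(flag_drop_off(weekly_counts))
```

Run weekly rather than at the end, a check like this gives you time to nudge an ambassador before the gap becomes a black mark in the final report.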

Photo Source: http://farm1.staticflickr.com/61/199568095_b8f6eacd8b_n.jpg